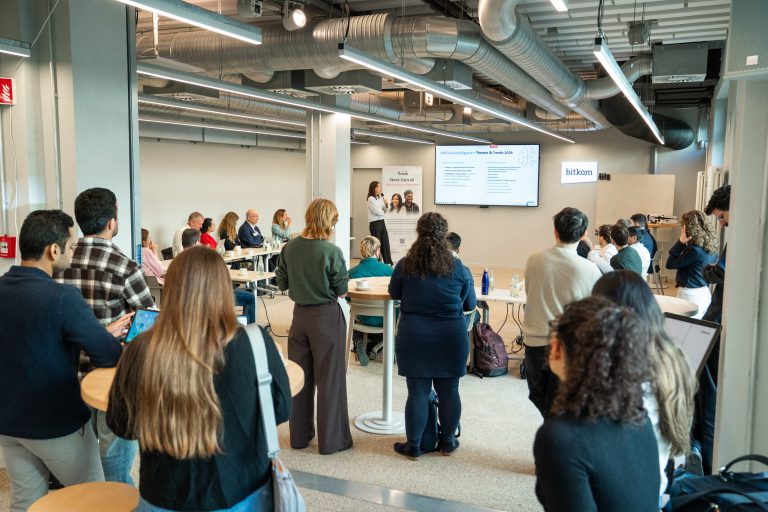

The aim of the meeting was to bring research and practice together, share perspectives, and collaboratively develop approaches for the responsible design of AI systems.

At the center of the event was the dialogue between early-career AI researchers and experts from industry: How can scientific insights on trustworthy AI be translated into real-world applications? And what challenges arise in practice during this process?

Focus: Security, Transparency, and Fairness

The discussions focused on three central dimensions of trustworthy AI: security, transparency, and fairness. Guided by these themes, participants explored how companies can responsibly develop and implement AI systems.

Particular attention was given to the transfer of scientific knowledge into business contexts. Many concepts related to explainable, fair, or secure AI originate in research. However, implementing these ideas in everyday corporate environments often presents organizations with new technical, organizational, and regulatory challenges.

Insights from Research and Practice

The event was opened by Lucy Czachowski, Head of AI & Cloud Policy at Bitkom, and Laure Poirson, Team Lead at AI Grid. Both emphasized the importance of close collaboration between research and industry—not only to discuss responsible AI, but also to implement it in practice.

This was followed by an online keynote from Ute Schmid, member of the AI Grid Executive Board and Professor of Cognitive Systems at the Otto-Friedrich University of Bamberg, titled “Framing Trustworthy & Responsible AI: Dimensions, Definitions, and Implications.” In her talk, she provided a conceptual overview of the field and highlighted current scientific debates around human-centered and explainable AI. Particular emphasis was placed on the implications of these approaches for the security, transparency, and fairness of AI systems.

Next, Manoj Kahdan, AI Grid alumnus and doctoral researcher at RWTH Aachen, delivered an impulse talk presenting practical approaches for implementing responsible AI within companies. He also discussed organizational challenges in establishing AI governance structures, as well as regulatory considerations, particularly in the context of the EU AI Act.

Three Tracks: From Use Cases to Actionable Recommendations

Following the introductory presentations, participants continued their work in three parallel tracks. The goal was to analyze concrete corporate applications and derive overarching recommendations for action.

Initially, the focus was on practical examples from various industries. The groups discussed specific use cases, examined the AI systems in use and their level of implementation, and exchanged experiences from real-world applications. The discussion addressed technical aspects (data architectures, model integration, toolchains) as well as organizational questions about governance structures and internal processes.

After a joint lunch break, during which participants could exchange ideas in a relaxed atmosphere and make new contacts, the discussions shifted toward the entire lifecycle of AI systems. Participants identified challenges ranging from data usage and model development to training processes, deployment, monitoring, and continuous quality control in production environments.

Expert Perspectives from Research and Industry

The discussions within the tracks were accompanied by experts from both academia and industry.

In the Fairness track, Rebekka Görge, Senior Data Scientist and PhD researcher at Fraunhofer IAIS, and Michael A. Hedderich, head of the research group for human-centered NLP and AI at LMU München and the Munich Center for Machine Learning, contributed current perspectives from research and AI assurance. Discussions focused in particular on how fairness can be integrated as a measurable quality criterion in development processes.

The Security & Privacy track included contributions from Paul Zenker, Deputy Manager for AI Security, Physical Assets, and IT at KPMG, who provided a practical perspective on security requirements for AI systems. The discussion was complemented by demonstrations of potential attack scenarios on large language models by AI Grid members Felix Mächtle and Jonas Sander, who presented risks such as LLM jailbreaking and possible countermeasures.

In the Explainability & Transparency track, Björn Weißhaupt, Director of Data Analytics Consulting at EPAM, shared insights from consulting work on real AI implementation projects. Academic perspectives on explainable AI were provided by Dr. Vera Schmitt, AI Grid mentor and head of the XplaiNLP research group at TU Berlin.

At the end of the event, representatives from research and industry presented the results of the three tracks in short pitch formats, summarizing the key insights from the discussions.

From Discussion to Guideline

The ideas and recommendations developed during the sessions were subsequently reflected upon and consolidated in a structured way. The goal was to develop a coherent overall perspective from the many viewpoints and derive concrete starting points for companies.

The results of the discussions will now be developed further into a practical guideline for companies. It is intended to help organizations systematically develop and implement trustworthy and responsible AI.

The final results and the completed guideline will be presented at an online event on May 7, 2026.